I’ve been debating for a few days about whether I should write about this HMB controversy or not. I’m quick to call out ideas that are suspect or outright wrong (while trying to exercise the principle of charity), but I try to avoid passing personal judgements unless I have all the facts and am sure beyond a reasonable doubt that the people in question are behaving unethically. This is partially because I have an aversion to conflict, but it’s also because I expect the same courtesy and don’t want to be a hypocrite.

Usually, it’s not particularly hard to criticize ideas without criticizing people, but in this case, the idea and the people go hand-in-hand – hence the trepidation about this article. However, given the circumstances, I feel compelled to speak out because I don’t want people to be misled. I’m going to avoid any concrete accusations, but I think you’ll see by the end of this article that the situation addressed here is quite fishy.

Alright, with that vague intro out of the way, let’s get into the meat of the article: Is HMB literally better than (low-to-moderate dose) steroids?

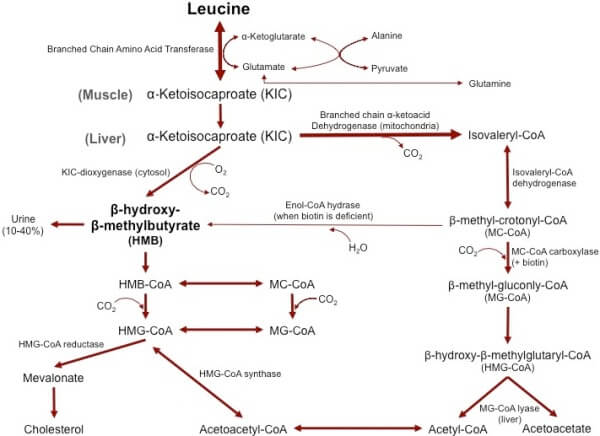

On its face, that may sound like a ridiculous question. HMB (beta-hydroxy-beta-methylbutyrate) is a supplement that’s been around for many years now. It’s a metabolite of leucine, which is the amino acid most associated with triggering muscle growth. It does affect recovery from exercise, and perhaps muscle growth directly, but you wouldn’t expect an amino acid metabolite to have steroid-like effects.

However, a couple of recent studies have reported results that, taken at face value, suggest HMB works better than steroids for building muscle. That raises some red flags. Quite a few of my readers who stay up-to-date with the research have asked me about these studies. They’re understandably excited about the prospect of a legal supplement that could get them steroid-like results without the side effects, and I’m afraid they’re being misled into spending money on something that doesn’t work (or, at the very least, doesn’t work as well as they expect it to). That’s why I’m writing this article in the first place.

HMB’s Mechanism of Action

So first things first: What is it, and what does it do?

HMB is a naturally occurring byproduct of leucine breakdown. It behaves similarly to leucine in the body; both independently increase muscle protein synthesis and inhibit muscle protein breakdown, though leucine is better at stimulating protein synthesis, while HMB is better at inhibiting protein breakdown and at mitigating muscle damage in response to training.

The Early Research on HMB

Because of this, HMB has actually proven to be quite a useful supplement for new lifters. Most studies using untrained and lightly trained lifters (from a variety of labs, some with independent funding and some funded by companies that sell HMB) show benefits of using HMB. When we think about HMB’s primary mechanism of action – inhibiting muscle protein breakdown and decreasing muscle damage – that makes sense.

Most work studying the time course of muscle growth in new lifters finds that not much growth occurs in the first 3-4 weeks of training, after which it accelerates for a month or two before slowing down again. There are two primary mechanisms that explain this finding:

- With more complex movements, it takes a few weeks for a new lifter to master the movement well enough to put sufficient stress on their muscles. For example, one study employing the squat, bench press, and leg press found that the arms grew rapidly over the first 10 weeks of training, while leg lean mass and trunk lean mass didn’t increase significantly, likely because most people can put a lot of effort into biceps curls from day 1, while it takes a few weeks to start feeling comfortable with the bench press or leg press.

- Some degree of muscle damage and muscle protein breakdown occurs after training in both new and experienced lifters, but new lifters experience a lot more of both post-training. New lifters also get a bigger spike in muscle protein synthesis post-training, but much of that goes toward “covering their losses”: making up for the big uptick in protein breakdown and repairing large degrees of muscle damage, instead of building new tissue.

A recent study beautifully demonstrates the second point. When people first started training, they got bigger spikes in muscle protein synthesis, but they didn’t grow very much, likely due to huge increases in muscle damage. Because of that, their increases in protein synthesis weren’t very well-correlated with muscle growth. However, after three weeks of training, muscle protein synthesis post-training had decreased a bit, but the rate of growth had increased, and growth was then well-correlated with the increases in muscle protein synthesis because muscle damage had decreased considerably.

With that in mind, it makes sense that HMB would be a very effective supplement for new lifters: if you can cut down a bit on the muscle damage and muscle protein breakdown that are limiting their growth, you’d expect them to gain more muscle and strength in their first few months of training.

With more advanced lifters, however, the mechanism makes less sense. Yes, you still have a little muscle damage, and yes, muscle protein breakdown increases a bit post-training, but both of those factors play substantially smaller roles. Maybe you’d expect a small effect, but nothing like you’d expect to see in new lifters because the mechanism by which it works gets less and less important the longer you train.

And, until recently, that’s exactly what you saw in the research. Some studies reported no effect and some showed a small benefit. Interestingly, studies on both new and more experienced athletes also showed that HMB gave a slight advantage for fat loss; the magnitude wasn’t particularly large, and I’m not sure how its mechanism of action explains the effect, but the effect shows up consistently enough that I feel comfortable saying HMB may offer a slight body recomposition benefit as well.

Until 2014, the research on HMB “made sense” – a pretty consistent positive effect on muscle and strength in untrained lifters, and a much smaller and less consistent (though still positive) effect for trained lifters. It was the type of supplement that I’d recommend to pretty much any new lifter, and would recommend to advanced trainees who weren’t looking for a night-and-day difference, but who took a “better safe than sorry” approach to supplementation and didn’t mind spending the money.

The Tide Shifts

Then, out of nowhere, this study was published. Understandably, it made some waves.

The group taking HMB gained 7.4kg (16.3lbs) of lean mass over 12 weeks while the group taking a placebo gained 2.1kg (4.6lbs). Meanwhile, the placebo group decreased their body fat percentage by 2.1%, while the HMB group slashed theirs by 6.6%.

Those were certainly some eyebrow-raising results, but even they paled in comparison to the recently published follow-up.

This time around, the group taking a combined HMB and ATP supplement gained 8.5kg (18.7lbs) of lean mass versus 2.1kg in the placebo group, while losing 8.5% body fat versus 2.4% in the placebo group.

Both studies were performed on relatively well-trained lifters (averaging a ~145kg/315lb squat, ~113kg/250lb bench, and ~170kg/375lb deadlift).

In Perspective

On their own, those are some very impressive results. In context, they’re bordering on unbelievable.

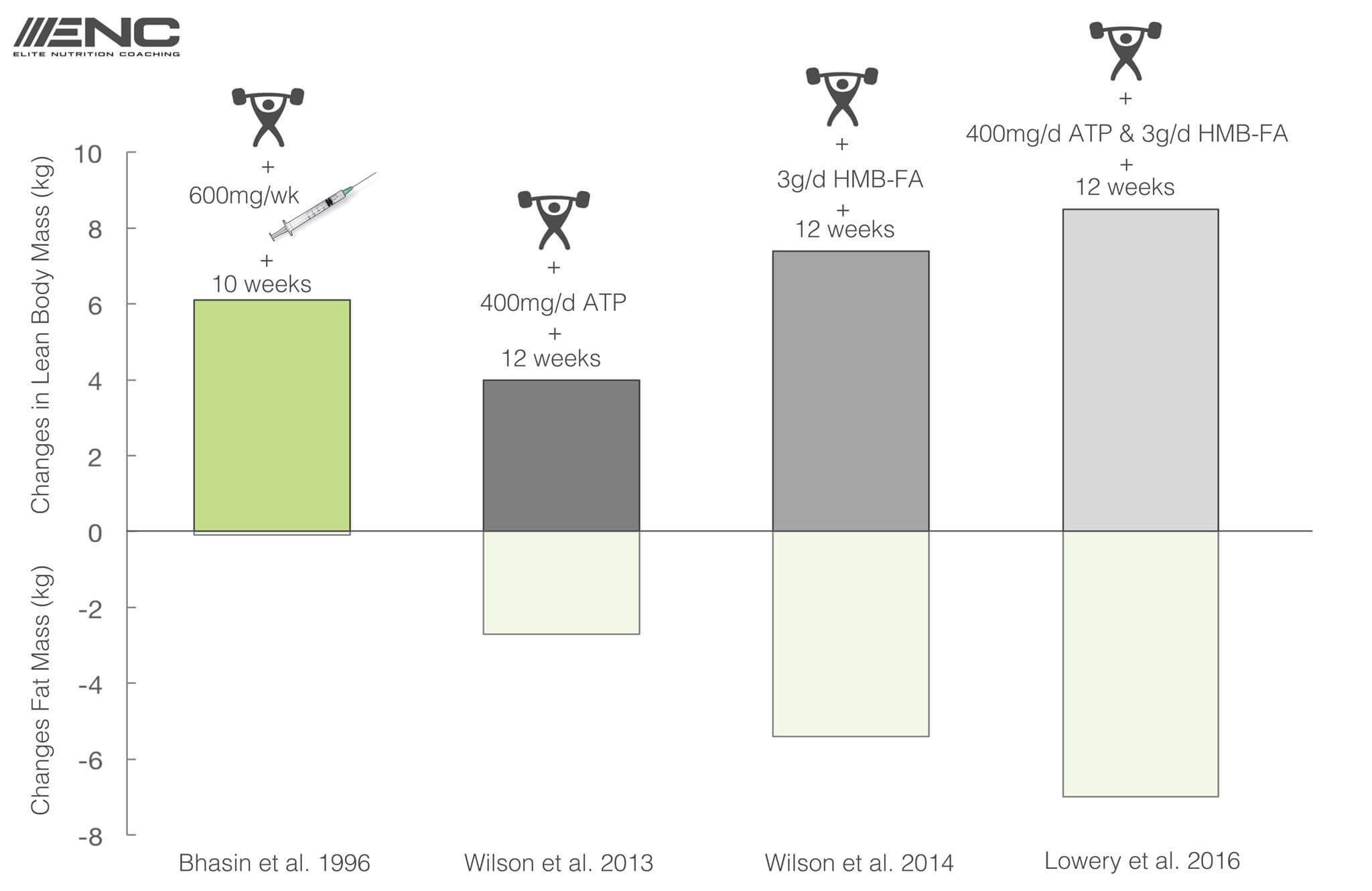

To put the results of these two studies in perspective, let’s compare them to the results of the best study to date examining the effects of steroids on strength and muscle growth (discussed further here).

The participants in this study and the two HMB studies had similar levels of training experience. The participants in the steroid study benched about 5kg less (97-109kg/215-240lbs), squatted about 31kg less (102-126kg/225-277lbs), and they had pretty similar amounts of lean body mass to start with. They weren’t identical, but they were close enough to make an apples-to-apples comparison.

Over 10 weeks, the group receiving 600mg of testosterone per week added 38kg to their squats and 22kg to their benches, increasing their strength in the two lifts by 60kg (30%) and adding 6.1kg of fat-free mass. The placebo group added 25kg to their squats and 10kg to their benches, increasing their strength in the two lifts by 35kg (15%) and adding 2kg of fat-free mass. The placebo group lost 1.1kg of fat, while the testosterone group only lost 0.1kg.

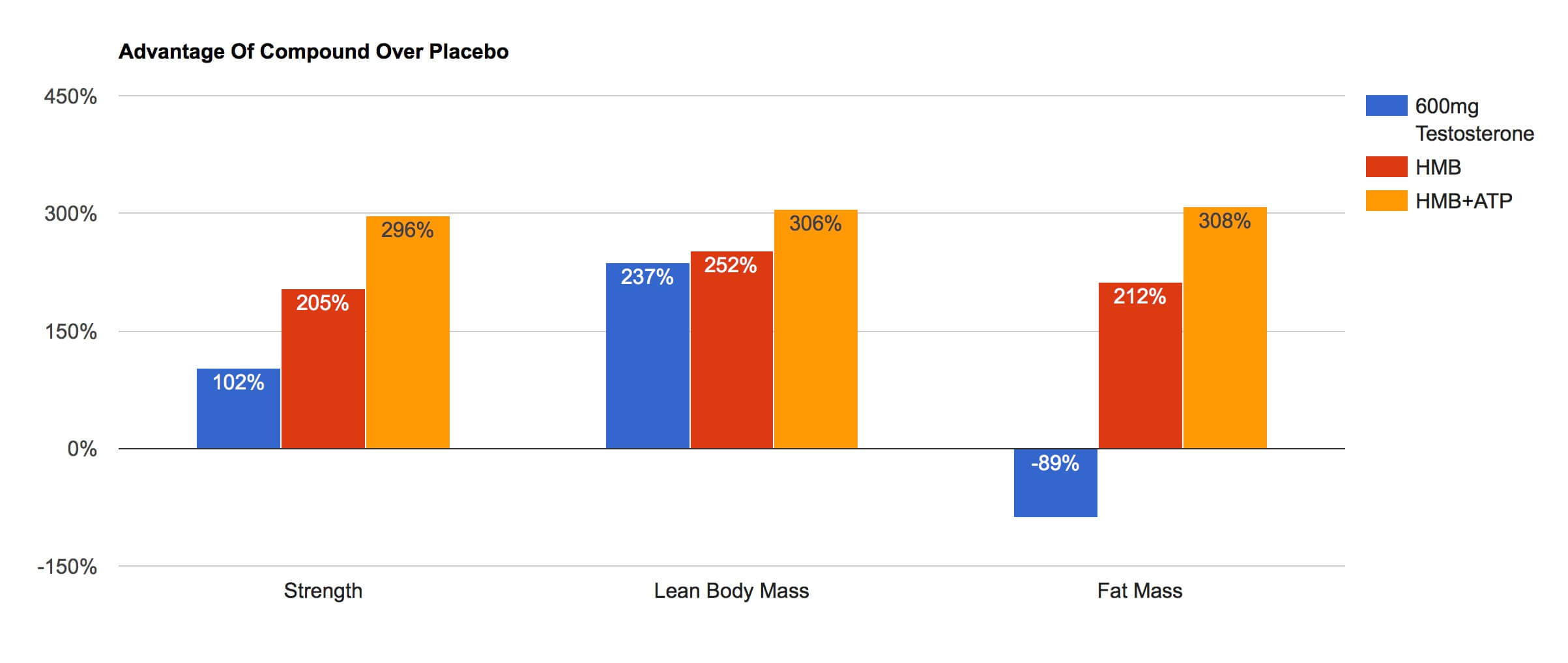

Joseph Agu of Elite Nutrition Coaching made a handy graphic summarizing the results of these studies (I’ll address the ATP study in a moment).

This comparison is interesting, to say the least. A supplement that’s been around for a long time, but which isn’t all that popular, works better than steroids?

The researchers have defended the studies by saying that the key factor is that their participants trained really, really hard. That could certainly play a role. After all, gains in muscle mass and strength tend to increase with training volume. However, I don’t think that tells the whole story here.

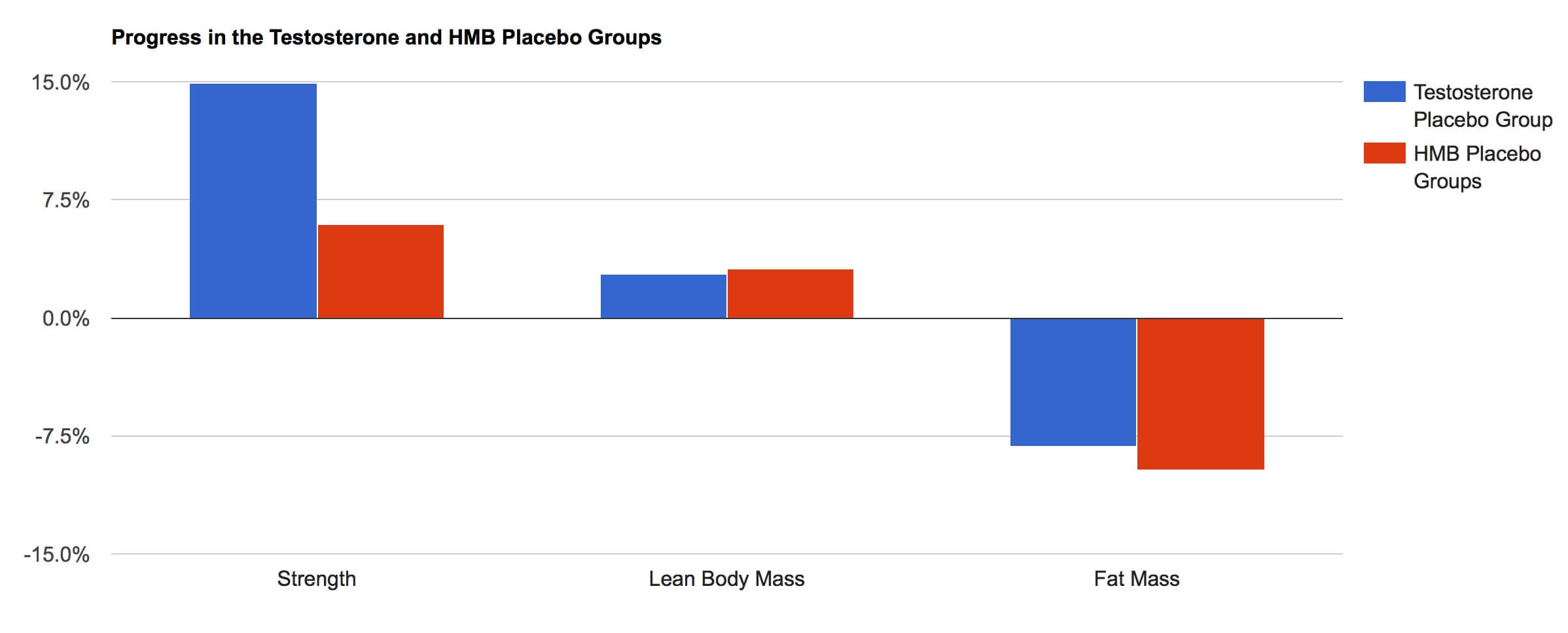

If the results of the HMB groups could be explained by the training program used, you’d expect the placebo groups in the HMB studies to get better results than the placebo group in the steroid study. However, that’s not what you see.

Both HMB placebo groups gained 2.1kg of lean mass while the steroid placebo group gained 2kg, and the steroid placebo group gained more strength; they put roughly 15% on their bench and squat combined, while the HMB placebo groups only added about 6% to their three-lift total (squat, bench, and deadlift). In fact, the placebo steroid group gained more strength on just their squat and bench (35kg) than the placebo HMB groups gained on their squat, bench, and deadlift combined (~25kg).

We can also look at how much the HMB groups “beat” their respective placebo groups versus how much the testosterone group “beat” its placebo group.

The group taking testosterone added about 3x as much muscle (6.1kg vs. 2kg) and about 1.7x as much strength (60kg vs. 35kg) as the placebo group, while the HMB groups added 3.5-4x as much muscle and 3-4x as much strength. In addition, the HMB groups crushed their respective placebo groups in terms of fat loss, while the placebo group in the testosterone study actually lost more fat than the group taking testosterone.
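For anyone who wants to check these ratios, here’s a quick Python sketch that recomputes them from the rounded group means quoted above (6.1kg vs. 2kg of lean mass for testosterone, 7.4kg and 8.5kg vs. 2.1kg for the two HMB studies, and 60kg vs. 35kg of combined squat and bench gains for testosterone). The dictionary and function names are just illustrative, and because the inputs are rounded, treat the outputs as approximate.

```python
# Lean-mass gains in kg as (treatment group, its own placebo group), rounded as quoted above.
LEAN_MASS_GAINS = {
    "Testosterone, 600 mg/week": (6.1, 2.0),
    "HMB (2014 study)": (7.4, 2.1),
    "HMB + ATP (follow-up)": (8.5, 2.1),
}


def fold_gain(treatment: float, placebo: float) -> float:
    """How many times more the treatment group gained than its placebo group."""
    return treatment / placebo


for name, (treated, placebo) in LEAN_MASS_GAINS.items():
    print(f"{name}: {fold_gain(treated, placebo):.2f}x the placebo lean mass gain")

# Combined squat + bench gains in the testosterone study: 60 kg vs. 35 kg for placebo.
print(f"Testosterone strength: {fold_gain(60, 35):.2f}x the placebo gain")
```

Using the rounded 2kg placebo figure, testosterone comes out a little over 3x for lean mass and about 1.7x for strength, while the two HMB studies land at roughly 3.5x and 4x.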

If anything, this comparison may understate the degree to which HMB outperformed testosterone. In the HMB studies, the HMB and placebo groups were very similar in terms of strength and lean mass pre-training, while in the Bhasin study the placebo group was about 18% stronger and had 6.8kg more lean mass than the steroid group pre-training. Since the placebo group started out somewhat better trained, you’d expect its results to be slightly depressed.

Lest you think that the “problem” in the steroid study was the dose of testosterone used, there was another group of participants in the same study who took 600mg of testosterone per week without training, and they actually gained more lean body mass (3.2kg vs. 2kg) than the group that trained without using testosterone.

In short, if you take these studies at face value, taking HMB isn’t just better than injecting a very effective dose of testosterone. It’s a lot better.

Slight Digression: What about ATP?

In the 2014 study, HMB was the only compound that was used. However, an earlier study looked at just the effects of ATP, and the most recent study examined the effects of HMB combined with ATP.

ATP (adenosine triphosphate) is the compound your body uses for almost all of its energy needs. In theory, if you could get more ATP into your muscles, they would be more fatigue-resistant, you’d be able to handle higher training volumes, and you’d make more progress.

However, as a supplement, orally ingested ATP seems to be a non-starter. One study using up to 5000mg of oral ATP (pellets with a coating designed to let it pass through the stomach unscathed) found that ATP levels in the blood didn’t increase with supplementation. With that in mind, it’s highly questionable that the 400mg/day of oral ATP used in these studies would make it to the muscles in the first place, much less have a noticeable effect (since muscle ATP levels are tightly regulated by various negative feedback loops governing the metabolic pathways your body uses to produce ATP). Another study (from another lab) bears this out: The ATP group didn’t have a rise in blood ATP levels and didn’t beat the placebo group on any of the acute exercise measures they recorded.

Even if orally ingested ATP could make it into your blood and be taken up by the muscles, it’s questionable whether it would have a huge effect. At best, you could expect results similar to creatine (which works via a similar mechanism, giving your body more readily available energy for intense exercise). That’d be a meaningful improvement, but not 4kg (8.8lbs) of lean body mass in 12 weeks with fat loss to boot (much less 8.5kg of lean body mass when you add HMB into the mix).

(The other proposed mechanism of action is that ATP is rapidly taken up by red blood cells, which would explain why there’s no increase in blood ATP concentrations, and then aids in vasodilation and blood flow to active tissues when energy demands are high, as you’d see in an exercising muscle. With that in mind, you might expect results more similar to compounds such as citrulline; again, that’s a compound that “works,” but not one that would add 4kg of muscle along with 2kg of fat loss in 12 weeks in trained lifters.)

HMB-FA: Does the form of HMB make all the difference?

The last defense I’ve heard for these studies is that they used a new form of HMB. Most HMB supplements come in the form of a calcium salt, with HMB bound to calcium (HMB-Ca). These two studies, on the other hand, used the free acid form of HMB, which isn’t bound to calcium (HMB-FA).

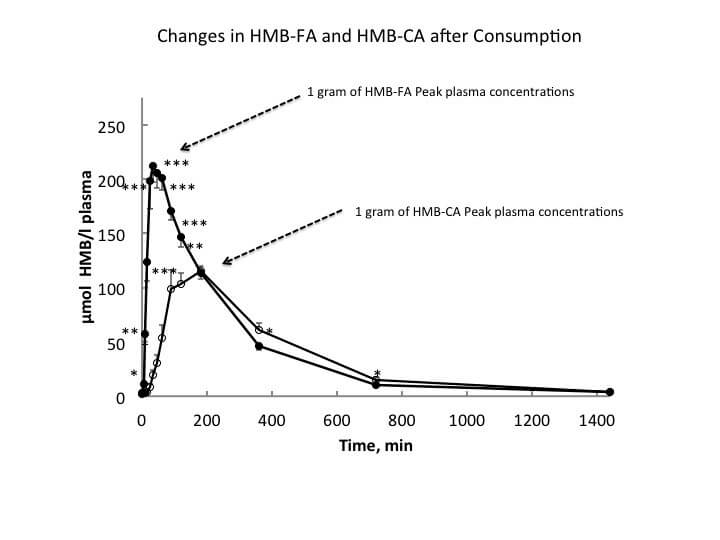

There’s some evidence that HMB is more orally bioavailable as a free acid than as a calcium salt; it hits peak blood concentrations earlier, reaches roughly double the concentration, and the area under the curve (blood concentrations over a long period of time) is almost twice as large.

Is that enough to explain the exceptional results in these two studies?

I don’t think it is.

If it were, then at best you could expect twice the results seen in previous studies. In reality, you’d expect less than twice the results, because it’s very rare to see a dose-response curve that’s just a straight line (e.g., if 5g of creatine gets you 20% better results, 500g of creatine isn’t going to get you 2000% better results; the increase in effectiveness generally tapers off as you put more and more of a substance in your body). However, that’s a moot point, because the results in these two studies are far more than twice as large as those in any previous HMB study with resistance-trained subjects.
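To make the diminishing-returns point concrete, here’s a sketch using the Emax model, a standard saturating dose-response curve from pharmacology. The parameter values (`e_max`, `ed50`) are hypothetical and not fitted to any HMB data, and treating a doubled blood concentration as a doubled “dose” is a simplifying assumption. For any positive values, doubling the dose yields less than double the effect.

```python
def emax_response(dose: float, e_max: float = 1.0, ed50: float = 3.0) -> float:
    """Emax model: the effect rises with dose but saturates toward e_max.

    ed50 is the dose producing half of the maximal effect (a hypothetical value here).
    """
    return e_max * dose / (ed50 + dose)


def gain_from_doubling(dose: float, ed50: float = 3.0) -> float:
    """Ratio of the effect at twice the dose to the effect at the original dose."""
    return emax_response(2 * dose, ed50=ed50) / emax_response(dose, ed50=ed50)


for dose in (0.5, 3.0, 6.0, 30.0):
    print(f"dose {dose:>5}: doubling it multiplies the effect by {gain_from_doubling(dose):.2f}")
```

The ratio only approaches 2 when the dose is tiny relative to `ed50`, and it shrinks toward 1 as the response saturates.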

The study that sheds the most light on this question doesn’t paint a very rosy picture for the idea that twice the HMB would mean substantially more progress. (Here, “twice the HMB” means twice the total daily dose of HMB-Ca, which is meant to roughly approximate the doubled blood concentrations seen with HMB-FA versus HMB-Ca.) Resistance-trained males were given either 0g, 3g, or 6g of HMB-Ca per day. Not only did the 6g group not experience double the gains, neither of the groups taking HMB got results significantly better than the placebo group. Now, this was a pretty short study (only 4 weeks), but in both of the more recent HMB studies, 4 weeks was more than enough time for significant differences to surface.

Another study on untrained subjects (this one lasting 8 weeks) also doesn’t do much to boost my confidence. Subjects took roughly 0g, 3g, or 6g of HMB per day (0mg/kg, 38mg/kg, and 76mg/kg) while strength training. At the end of 8 weeks, there were no differences in 1RM strength between the groups, and only the 3g group had an increase in lean body mass (the 0g and 6g groups didn’t). There were some between-group differences in peak isometric torque and peak isokinetic torque (some favoring the 3g group and some favoring the 6g group), but they weren’t anything to write home about. I suspect the subjects in this study may not have trained hard enough, since two of the three groups of untrained subjects didn’t gain lean body mass, but the study does reinforce the point that more HMB doesn’t necessarily mean better results.

Is it ENTIRELY unbelievable?

There’s one more study worth mentioning. A 2009 study on HMB with new lifters had results very similar to these two recent studies.

The group taking HMB gained roughly 2x as much strength, gained roughly 2.5x as much muscle, and lost about 2x as much fat as the group taking a placebo. In fact, the HMB group in this study actually gained even more lean body mass than the HMB groups in either of the two new studies: roughly 10kg (22lbs) in 12 weeks. It should also be noted that the supplement being examined in this study included arginine, glutamine, and taurine (which I don’t think would influence the results since none of them has been shown to make a huge difference in body composition, but it’s worth mentioning).

So who knows. Maybe I’m just being overly critical. Maybe HMB is, through some elusive mechanism, better than steroids.

I will say that, at the very least, the 2009 study isn’t TOO out of place among other HMB results in new lifters, though.

One study with untrained lifters found a 1.2kg increase in fat-free mass and a 1.6kg drop in fat in just 3 weeks (similar fat loss and 3x the muscle gained versus placebo).

Another study in adolescent volleyball players (who only dedicated about 15% of their training time to weight training; prior weight training experience wasn’t mentioned) lasting 7 weeks found an increase in fat-free mass of 2.3kg, slight drop in body fat percentage, and strength gains of ~18-30% in the bench press and squat (when normalized to bodyweight) with HMB versus a very slight decrease in fat-free mass, a slight increase in body fat, and strength gains of only 0-7% with a placebo.

If you assumed rate of muscle gain would be similar over 12 weeks, you’d be looking at 4.8kg of lean body mass added in a calorie deficit in the first study, and 4kg of lean mass added with intense training catered to volleyball and fairly little strength training (again, the placebo group actually gained a bit of fat and lost a tiny bit of muscle) in the second study. So, can I buy ~2-2.5x that rate of progress with a dedicated strength training program (unlike the second study) and no calorie deficit (unlike the first study)? At the very least, it seems to be within the realm of possibility. It’s an outlier result, to be sure, but at least it’s in the same zip code as previous studies.
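The extrapolation above is simple arithmetic, but it’s easy to sketch. The function assumes a constant weekly rate of lean mass gain, the same simplifying assumption the paragraph makes (real gains rarely accrue linearly), and the 10kg figure is the roughly 10kg gained in the 2009 study.

```python
def extrapolate_linear(gain_kg: float, weeks: float, target_weeks: float = 12) -> float:
    """Scale a lean-mass gain to a longer study, assuming a constant weekly rate."""
    return gain_kg / weeks * target_weeks


untrained_12wk = extrapolate_linear(1.2, 3)    # 3-week study in untrained lifters: about 4.8 kg
volleyball_12wk = extrapolate_linear(2.3, 7)   # 7-week volleyball study: about 3.9 kg
gain_2009 = 10.0                               # roughly 10 kg over 12 weeks in the 2009 study

print(f"Extrapolated 12-week gains: {untrained_12wk:.1f} kg and {volleyball_12wk:.1f} kg")
print(f"The 2009 result is {gain_2009 / untrained_12wk:.1f}x and "
      f"{gain_2009 / volleyball_12wk:.1f}x those extrapolated gains")
```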

With that backdrop, and with the context that the mechanism of action for HMB actually makes sense here (mitigating muscle damage, with excessive muscle damage being one of the factors that seems to limit muscle growth in new trainees much more so than in more experienced trainees), some pretty crazy gains over 12 weeks are at least within the realm of possibility for new lifters.

I’m skeptical of a study getting results in new lifters that are at least 2-3x better than any previous results, even when the mechanism of action for the compound in question makes sense. I’m 10x more skeptical about well-trained lifters getting similar results to new lifters (who are already getting better-than-steroids results) when the mechanism of action makes much less sense.

Be Skeptical, Even About Science

There are a few more issues with these two new HMB studies that I could get into (for example, in this one, all of the reported performance measures on week 8 are different between Table 1 and Table 2. Maybe they just used a statistical procedure I’m not familiar with, but I’m not aware of any that let you just change the means). But by this point, it should be pretty clear that I doubt the results of these two studies. I’m not going to cry outright fraud, but I simply can’t believe the results.

I just can’t.

And who knows, the fault could be my own due to a simple lack of credulity. But these two studies’ results are so different from everything that came before them that they raise too many red flags for me. I’ll need to see them replicated by another lab with another batch of trained lifters before they’ll sit well with me.

This raises an important point: Being a skeptical thinker means also being skeptical about scientific findings.

Let me say up front that I think science is the best process we, as a species, have devised so far to systematically answer questions and learn more while attempting to rein in our biases and errors in thinking. But it’s not perfect. There’s a lot of bad science that gets published.

Part of the problem boils down to basic Bayesian probability. For a highly technical treatment of the problem, I’d strongly recommend this paper. Here’s the simple explanation:

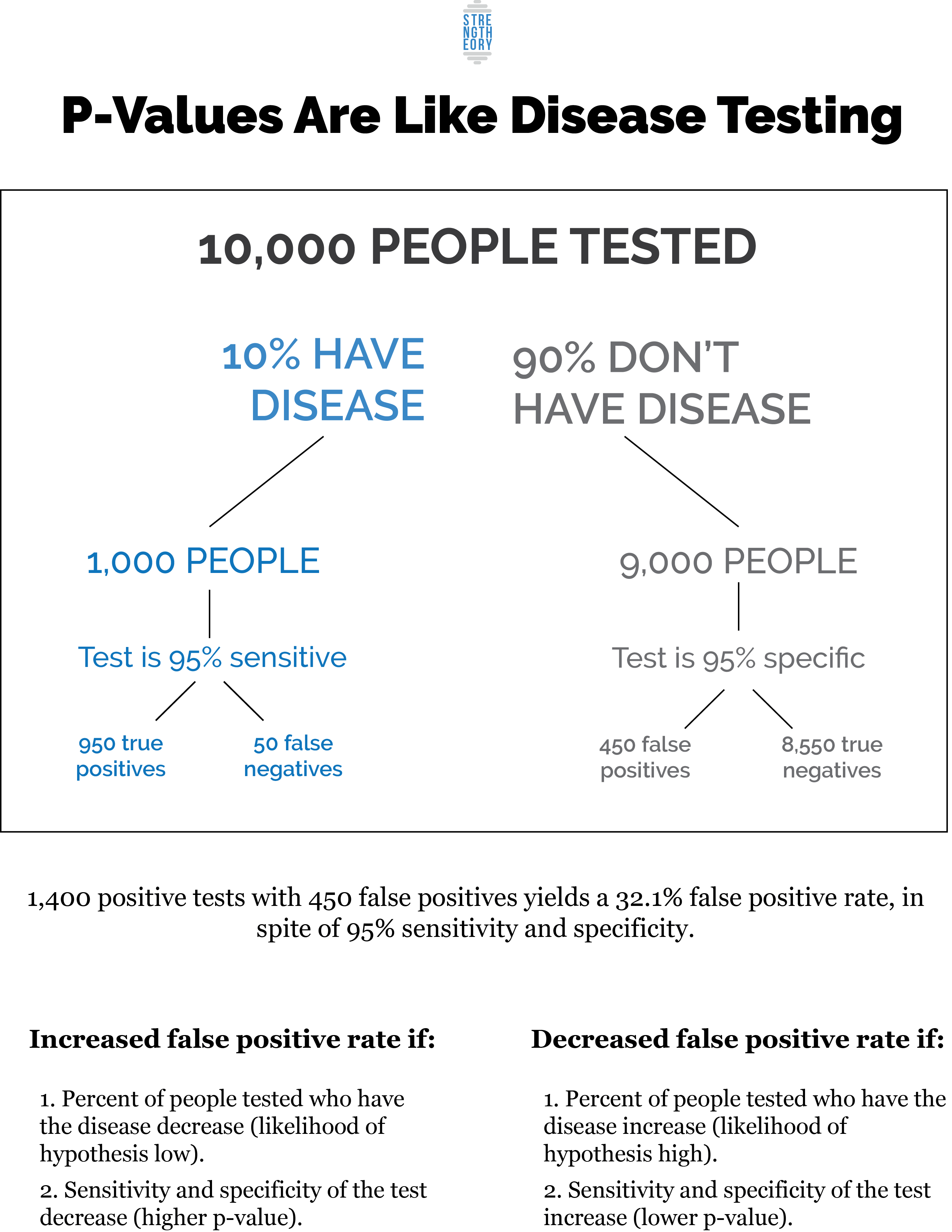

Most scientific research uses p-values. Most people think a p-value tells you how likely it is that a result is “true.” For example, if p=0.05, most people think that means there’s only a 5% chance the finding is wrong, and therefore a 95% chance you have a true finding. However, that’s not what a p-value tells you. It tells you how likely you’d be to get a result at least as extreme as the one you observed, assuming the null hypothesis is true (in exercise science, the null hypothesis is generally “these two exercise programs are equally effective” or something similar).

If you get a small p-value, it may mean that the null hypothesis was false (hooray! Program A is actually better than Program B!) or it may mean that you got an unusual sample. So, if Program A produced better results than Program B and p=0.05, it means that if the two programs were actually equally effective, there would be only a 5% chance of seeing a difference at least that large. It doesn’t tell you the chance that Program A is actually better, that both programs are equally effective and you got a weird sample, or that Program B is actually better.
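A small simulation can make the definition concrete. This is a sketch under assumptions chosen for illustration: two groups of 20 with normally distributed outcomes and a known standard deviation, so a simple z-test stands in for the t-tests real studies usually use. When the two “programs” are truly identical, about 5% of experiments still come out significant at p < 0.05. That’s all the p-value controls; it says nothing directly about how likely Program A is to be better.

```python
import random
from statistics import NormalDist, mean


def z_test_p_value(a: list[float], b: list[float], sigma: float) -> float:
    """Two-sided p-value for a difference in group means, assuming a known, shared SD."""
    se = sigma * (1 / len(a) + 1 / len(b)) ** 0.5
    z = (mean(a) - mean(b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def null_significance_rate(n_experiments: int = 2000, n_per_group: int = 20,
                           sigma: float = 1.0, alpha: float = 0.05, seed: int = 1) -> float:
    """Fraction of simulated experiments that reach p < alpha when the null is true."""
    rng = random.Random(seed)
    significant = 0
    for _ in range(n_experiments):
        # Both "programs" draw outcomes from the same distribution: no real difference.
        program_a = [rng.gauss(0.0, sigma) for _ in range(n_per_group)]
        program_b = [rng.gauss(0.0, sigma) for _ in range(n_per_group)]
        if z_test_p_value(program_a, program_b, sigma) < alpha:
            significant += 1
    return significant / n_experiments


print(f"Share of 'significant' results with no real difference: {null_significance_rate():.3f}")
```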

If there’s actually no difference between Program A and Program B, or if Program B is actually better but Program A got better results that reached statistical significance, that statistically significant result in favor of Program A would be called a “false positive.” Most people assume that a p-value of 0.05 means only 5% of statistically significant findings are false positives. In reality, the share of significant findings that are false positives (more precisely called the false discovery rate) is often much higher: around 30%, and sometimes 50%+.

How does that work?

Imagine you get tested for a disease. The test is 95% sensitive (if you actually have the disease, the test will catch it 95% of the time) and 95% specific (if you don’t have the disease, the test will only mess up and say you have the disease 5% of the time). It’s a pretty rare disease, but one that doctors frequently test for, so only 10% of the people tested actually have the disease.

Your test comes back and, unfortunately, you tested positive for the disease. Oh no! There’s a 95% chance you have the disease, right?

Not so fast.

If 10,000 people get tested and only 10% of them have the disease, that means 1,000 of the people tested have the disease, and 9,000 don’t.

Of the 1,000 that do have the disease, the test will return 950 positive tests and 50 negative tests, since it’s 95% sensitive.

Of the 9,000 that don’t have the disease, the test will return 8,550 negative tests and 450 positive tests since the test is 95% specific.

So, of the 10,000 people tested, there are 1,400 positive tests, but only 950 of those 1,400 come from people who actually have the disease. Instead of a 95% chance you actually have the disease, there’s only a 67.9% chance, even with a test that’s 95% specific and 95% sensitive.

If we change our assumption and say that only 1% of the people tested actually have the disease, then you’d get 95 positive tests from people with the disease (100*0.95) versus 495 false positives (9,900*0.05). You’d only have a 16% chance of actually having the disease if you tested positive the first time around. On the other hand, if 50% of the people who got tested actually had the disease, you’d get 4,750 positive tests from people with the disease, compared to 250 false positives, giving you a 95% chance of actually having the disease.
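Here’s the same arithmetic as a short Python sketch, using the 95%-sensitive, 95%-specific test and the 10,000-person population from the example. The function name is just illustrative; the quantity it computes is usually called the positive predictive value.

```python
def positive_predictive_value(prevalence: float, sensitivity: float = 0.95,
                              specificity: float = 0.95, population: int = 10_000) -> float:
    """Share of positive tests that come from people who actually have the disease."""
    sick = population * prevalence
    healthy = population - sick
    true_positives = sick * sensitivity
    false_positives = healthy * (1 - specificity)
    return true_positives / (true_positives + false_positives)


for prevalence in (0.50, 0.10, 0.01):
    ppv = positive_predictive_value(prevalence)
    print(f"{prevalence:.0%} prevalence -> {ppv:.1%} chance a positive test is real")
```

It prints 95.0%, 67.9%, and 16.1% for prevalences of 50%, 10%, and 1%, matching the hand calculations.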

That’s a simplified version of how p-values work. A significant p-value is the same thing as your test for a disease coming back positive. If most of the people being tested actually have the disease (if you’re asking a research question for which you’re already very confident about the answer), a positive test (a low p-value) means there’s a good chance that you actually have the disease (the statistically significant finding is a true positive, and Program A is actually better than Program B). If, on the other hand, most of the people being tested don’t actually have the disease (if you’re asking a research question where you’re unlikely to uncover meaningful differences), your positive test may still mean you have a pretty low chance of actually having the disease (the statistically significant finding is a false positive, and Program A isn’t actually better than Program B).
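Translating the analogy into research terms: “prevalence” becomes the fraction of tested hypotheses that are actually true, “sensitivity” becomes statistical power, and the chance a healthy person tests positive becomes the significance threshold (alpha). Here’s a sketch; the 80% power default and the prior probabilities are illustrative assumptions, not figures from any particular field.

```python
def false_discovery_rate(prior_true: float, power: float = 0.8, alpha: float = 0.05) -> float:
    """Share of statistically significant findings that are false positives.

    prior_true: fraction of tested hypotheses where a real effect exists (assumed).
    power: chance a real effect reaches significance (like test sensitivity).
    alpha: chance a null effect reaches significance anyway (like 1 - specificity).
    """
    true_hits = prior_true * power
    false_hits = (1 - prior_true) * alpha
    return false_hits / (true_hits + false_hits)


for prior in (0.5, 0.1, 0.01):
    print(f"{prior:.0%} of hypotheses true -> "
          f"{false_discovery_rate(prior):.0%} of significant results are false")
```

With a 10% prior, about 36% of “significant” results are false positives, even though every individual test used p < 0.05.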

Then when you add in the problem of p-hacking (testing for enough different variables that you’re sure to find statistically significant findings, even if most of them will be false positives), you run the risk of error rates being even higher. Here’s an amusing (and telling) account of p-hacking.
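A rough sketch of why testing many outcomes inflates error rates: if none of 20 measured variables is truly affected and each is tested at p < 0.05, the chance that at least one comes out “significant” is 1 - 0.95^20, about 64%. The code assumes the tests are independent (real outcome measures are usually correlated, which softens the inflation but doesn’t remove it), and the Monte Carlo check relies on p-values being uniformly distributed when the null hypothesis is true.

```python
import random


def chance_of_any_hit(n_outcomes: int, alpha: float = 0.05) -> float:
    """Probability that at least one of n independent null tests comes out p < alpha."""
    return 1 - (1 - alpha) ** n_outcomes


def simulate_any_hit(n_outcomes: int, alpha: float = 0.05,
                     n_studies: int = 20_000, seed: int = 2) -> float:
    """Monte Carlo check: under the null, each p-value is uniform on [0, 1]."""
    rng = random.Random(seed)
    hits = sum(
        any(rng.random() < alpha for _ in range(n_outcomes))
        for _ in range(n_studies)
    )
    return hits / n_studies


for n in (1, 5, 20):
    print(f"{n:>2} outcomes tested: {chance_of_any_hit(n):.0%} chance of a 'finding'")
```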

Add on top of that the problem of publication bias: Journals are much more likely to publish statistically significant novel results than null results or replication studies. This incentivizes p-hacking (if you can’t come up with some significant findings, your study may not get published, meaning you wasted your time and grant money doing research that won’t advance the field or your career) and the more dubious practice of running a single study multiple times until you come up with a significant result (without publishing the previous null results).

With that in mind, it shouldn’t be a surprise that a lot of “significant” findings aren’t replicable. Most scientists agree that there’s a reproducibility crisis in science right now. For example, in medicine, reports of fewer than 50% of studies replicating are pretty common (here’s a good review).

But doesn’t peer review cut down on all of those practices? Well…not really. On the whole, peer review seems to be pretty ineffective at weeding out bad science.

What about publishing in good journals? Nope. There’s no evidence that “good journals” (those with a high impact factor) are any better at separating the wheat from the chaff.

Then of course, there’s the problem of fraud. Sometimes it’s subtle (and maybe unintentional), like trying out a few different statistical tests until one of them gets you significant results, even if it’s not the most appropriate test for your data. Sometimes it’s a bit more brazen, such as excluding some results based on criteria you didn’t lay out beforehand. Sometimes it’s just wholesale fabrication. It’s impossible to know how often stuff like that happens, but scientists are people too, and some people are just scumbags.

So, with all of that being said, I still have a lot of faith in the scientific process. It’s not perfect, but it’s done a lot of good. (I’m writing this on a computer. My handwriting is so bad that sometimes I can’t even decipher it. Dear science, thank you for computers. Also the moon landing. Also modern medicine: I’m glad I’ve never gotten the measles or died of the flu.) Science also tends to straighten itself out eventually: If you run the disease test scenario a couple more times (assuming each repeat test is independent), the false positive rate plummets, which means even if a single study is wrong, that finding is eventually rooted out and given less and less credence as replication attempts fail. Moreover, the scientific community is actively working to address the problems I mentioned above; most scientists I know have a strong idealist streak, so when they find out there are fundamental issues with the scientific process itself, they care deeply about trying to fix them.
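Here’s a sketch of what “running the test a couple more times” does in the disease example, using the odds form of Bayes’ rule: each positive result from a 95%-sensitive, 95%-specific test multiplies the odds of disease by 0.95/0.05 = 19. The independence assumption is doing real work here; repeat tests that share the same error source wouldn’t help nearly as much.

```python
def posterior_after_positives(prior: float, n_positive: int,
                              sensitivity: float = 0.95, specificity: float = 0.95) -> float:
    """Probability of actually having the disease after n positive results in a row.

    Assumes each repeat test errs independently of the others.
    """
    odds = prior / (1 - prior)
    likelihood_ratio = sensitivity / (1 - specificity)  # 19 for a 95%/95% test
    odds *= likelihood_ratio ** n_positive
    return odds / (1 + odds)


for n in (1, 2, 3):
    p = posterior_after_positives(0.10, n)
    print(f"{n} positive test(s): {p:.1%} chance of disease, {1 - p:.1%} chance of a false alarm")
```

With the 10% prevalence from the example, one positive leaves about a 32% chance of a false alarm; two independent positives cut that to roughly 2.4%, and three to about 0.1%.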

BUT pulling up PubMed doesn’t mean you can shut off your critical thinking. Be leery of any single result that hasn’t been replicated, because p-values won’t save you. And if you’re going to dive into the science surrounding a specific topic, you’d better be prepared to go all in. When you have a solid grasp of the literature, you’ll have a better idea about whether you can trust the results of a particular study, or whether it’s too good to be true.

Concluding Remarks

I don’t know if the two recent HMB studies are legitimate. In light of the rather unimpressive data to date on well-trained lifters, the fact that the mechanism of action for HMB (and ATP) doesn’t seem to be overly important for well-trained lifters, and with the backdrop of previous results seen with steroids (not to mention the inconsistencies in the data themselves, and other major discrepancies/issues), I can’t personally bring myself to believe the results. I do think, though, that these two studies can be useful for making a point about the importance of thinking critically about things you read and the importance of putting data in the proper context, even if (especially if) you’re reading them in a peer-reviewed journal.

Regarding HMB itself, the recommendation I gave earlier in the article holds: I think it’s a great supplement for new lifters, and it may offer some slight benefits for more advanced lifters, though you shouldn’t expect a night-and-day difference. Since it’s more anti-catabolic than directly anabolic, it may be most useful for advanced lifters when dieting.

Don’t expect steroid-like results. Time may prove me wrong. HMB-FA may become the supplement that every pro athlete swears by. It may become the top priority on WADA’s list when trying to crack down on performance-enhancing drugs. If that happens, feel free to bring this article back up and tell me I was just a cynic.

I won’t hold my breath.

And with regard to science itself, stay skeptical, be thorough in your reading, connect with people who know more about a particular subject than you do, and stay optimistic. It’s not perfect, but it’s the best we’ve got, and it’s working at getting better.

Featured image credit to Joseph Agu