You may have heard the old adage, “What gets measured gets managed.” It could be used to justify the increasingly common practice of using wearable technology to pursue fitness goals. However, you might be surprised to learn that this adage is actually incomplete. The full quote, offered as business management guidance (although there’s disagreement about who said it first), reads: “What gets measured gets managed – even when it’s pointless to measure and manage it, and even if it harms the purpose of the organization to do so.” Valid, reliable, real-time measurement of energy expenditure would, of course, confer considerable advantages. However, whether such tracking is “pointless” or “harms our purpose” comes down to the validity, reliability, and utility of those measurements. If they’re terrible, tracking energy expenditure with wearable devices is pointless at best. If we’re making significant adjustments guided by erroneous data, it might even harm our purpose.

The presently reviewed study sought to evaluate the accuracy of three wrist-worn devices: the Apple Watch 6, the Polar Vantage V, and the Fitbit Sense. Sixty young, healthy individuals (30 males and 30 females; age: 24.9 ± 3.0 years; BMI: 23.1 ± 2.7 kg/m²) completed five different activities (sitting, walking, running, resistance exercise, and cycling) for ten minutes each while wearing each of the devices. Heart rate and energy expenditure were continuously measured using a Polar H10 chest strap and a MetaMax 3B portable metabolic system, respectively; these served as the criterion (reference) measurements against which the wearable devices were compared.

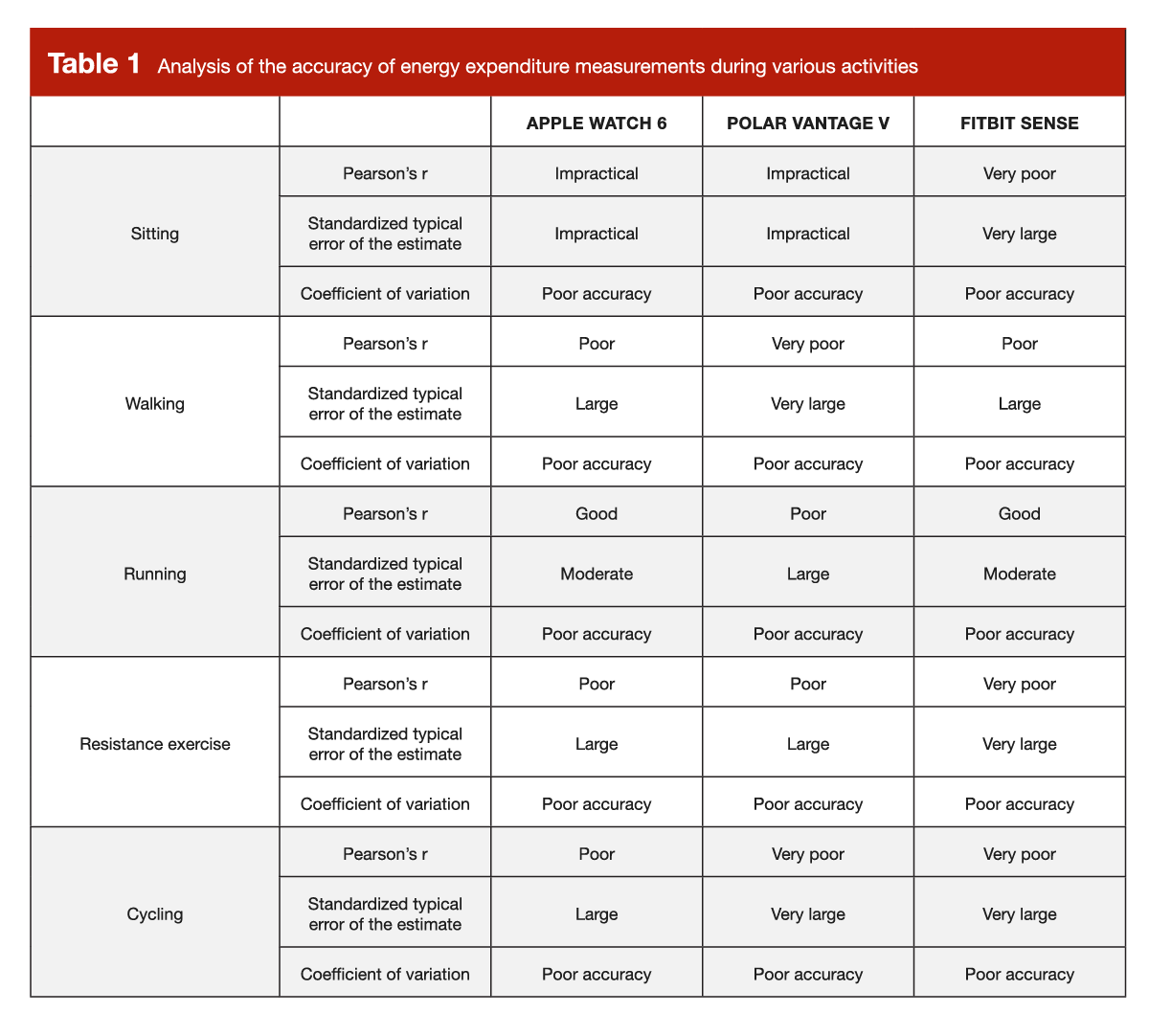

The researchers performed several analyses to compare the devices: Pearson correlations between each device and the criterion measure; the standardized typical error of the estimate for each device (a standardized version of “the typical amount by which the estimate is wrong for any given subject”); the coefficient of variation for each device (standard deviation / mean × 100); and Bland-Altman plots for each device (which assess agreement between two methods by plotting the difference between them against their average). Pearson correlations were interpreted as ≥ 0.995: excellent; 0.95-0.994: very good; 0.85-0.94: good; 0.70-0.84: poor; 0.45-0.69: very poor; < 0.45: impractical. Standardized typical error of the estimate values were interpreted as > 2.0: impractical; 1.0-2.0: very large; 0.6-1.0: large; 0.3-0.6: moderate; 0.1-0.3: small; < 0.1: trivial. Coefficients of variation were interpreted as > 10%: poor accuracy; 5-10%: acceptable accuracy; < 5%: high accuracy. So, to be clear: a high value is good for a Pearson correlation, but bad for a standardized typical error of the estimate or a coefficient of variation.
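The three sets of interpretation bands can be made concrete with a small lookup. The following is a minimal Python sketch, not the authors’ analysis code: all function names are hypothetical, and how a value falling exactly on a band boundary gets assigned is an assumption of this sketch (the published bands leave small gaps and overlaps at their edges). `standardized_tee_from_r` further assumes the Hopkins-style relation in which the standardized typical error of the estimate is approximately sqrt(1/r² - 1) for a simple linear calibration.

```python
import math

# Correlation bands from the study, checked from the best band downward.
# ASSUMPTION: boundary values go to the higher band (e.g. 0.9945 -> "very good").
PEARSON_BANDS = [(0.995, "excellent"), (0.95, "very good"), (0.85, "good"),
                 (0.70, "poor"), (0.45, "very poor")]


def classify_pearson(r: float) -> str:
    """Higher is better: return the first band whose lower cutoff r reaches."""
    for cutoff, label in PEARSON_BANDS:
        if r >= cutoff:
            return label
    return "impractical"


def classify_standardized_tee(tee: float) -> str:
    """Higher is worse: walk the bands from the worst end down."""
    if tee > 2.0:
        return "impractical"
    if tee > 1.0:
        return "very large"
    if tee > 0.6:
        return "large"
    if tee > 0.3:
        return "moderate"
    if tee >= 0.1:
        return "small"
    return "trivial"


def classify_cv(cv_percent: float) -> str:
    """Higher is worse: CV bands as reported in the study."""
    if cv_percent > 10:
        return "poor accuracy"
    if cv_percent >= 5:
        return "acceptable accuracy"
    return "high accuracy"


def coefficient_of_variation(sd: float, mean: float) -> float:
    """CV as defined in the text: standard deviation / mean x 100."""
    return sd / mean * 100


def standardized_tee_from_r(r: float) -> float:
    """ASSUMED Hopkins-style approximation: sqrt(1/r^2 - 1)."""
    if r == 0:
        return math.inf  # no linear relationship at all
    return math.sqrt(1 / r**2 - 1)


# Hypothetical device with r = 0.80 against the criterion.
r = 0.80
print(classify_pearson(r), classify_standardized_tee(standardized_tee_from_r(r)))
```

Here a correlation of 0.80 lands in the “poor” band, and `standardized_tee_from_r(0.80)` gives 0.75, which falls in the “large” error band. Plugging the correlation cutoffs (0.995, 0.95, 0.85, 0.70, 0.45) into the same relation yields roughly 0.1, 0.3, 0.6, 1.0, and 2.0, which are the standardized-error cutoffs. That suggests the two scales describe the same underlying agreement from different angles.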

Unfortunately, the researchers found that these wearable devices were pretty disappointing at estimating energy expenditure. Given the many ways they quantified device agreement and error, we could easily drown in a sea of numbers here. Because these numbers aren’t particularly intuitive to interpret, and I don’t want us to miss the forest for the trees, I have adapted a table that concisely summarizes the energy expenditure results using the authors’ own criteria for interpreting the values (Table 1).

As can be seen in Table 1, all three devices did quite poorly at estimating energy expenditure across the various types of activity. The researchers also constructed a number of Bland-Altman plots; including them all here would be excessive, so I will summarize them. It was fairly common to see mean bias values relatively far from zero (indicating that the methods disagree on average), very wide limits of agreement (reflecting high variability in the magnitude of disagreement), some very large outliers (suggesting that estimates were very, very bad for some specific individuals), and some instances of proportional bias (indicating that the disagreement systematically differed between people with lower-than-average and higher-than-average energy expenditure). In short, the estimates were pretty bad, and not in an easily predictable way. If a device consistently overestimated everyone’s energy expenditure by 100 kcal/day, it would be technically wrong but still quite useful, because you could simply subtract the offset. However, when you’re looking at large errors with a great deal of variability and some fairly substantial outliers, it’s hard for an individual user to confidently act upon the estimate they receive.

I assume that many people view the energy expenditure estimates from their wearables as imperfect estimates that should be interpreted with some caution, but these data reflect much more than a functionally negligible rounding error, or a consistent magnitude and direction of error that can be easily adjusted for. As such, the researchers stated: “Collectively, based on these findings, we would suggest that evaluating energy expenditure using these 3 wrist-worn devices does not provide an acceptable surrogate method for the estimation of energy expenditure in research studies.” Based on the data, it’s hard to argue with them, and they’re certainly not the first group to reach this type of conclusion: previous systematic reviews by Fuller et al. and Evenson et al. concluded that commercially available wearable devices estimated energy expenditure with insufficient validity.

Having said that, all is not lost. Plenty of people who do various types of endurance exercise find heart rate data quite helpful. The presently reviewed study found that the Apple Watch 6 did a pretty good job of tracking heart rate, whereas the heart rate accuracy of the other two devices varied depending on the activity being performed. So, if you want to use a wearable device to track your heart rate during endurance exercise (or to follow heart rate-based exercise prescriptions), the Apple Watch 6 would probably get the job done. In line with this finding, the systematic reviews by Fuller et al. and Evenson et al. reported that certain wearable devices (but not all) are pretty effective for heart rate tracking.

In addition, we have previously discussed the many benefits of striving for higher daily step counts, and these systematic reviews also reported that certain wearable devices do a pretty nice job of tracking step counts. So, returning to the old adage from the beginning of this research brief: the point is not that wearable devices are “pointless,” but that they may “harm our purpose” if we place too much confidence in their energy expenditure estimates. If you’re adjusting your calorie intake in direct response to estimates from a wearable device of questionable validity, you might be chasing an inaccurate number that leads you astray. The available research suggests that many wearable devices do a pretty poor job of estimating energy expenditure and sleep metrics, but some may be quite valid for measuring heart rate and step counts. I say “may” because the relative validity and reliability of each specific device must be assessed independently, and some models perform substantially better than others.

Rather than relying on a wearable device to estimate your daily energy expenditure, you might be better off with an approach that focuses on patiently and consistently tracking your daily energy intake and fluctuations in body weight. Changes in body mass reflect changes in the body’s total metabolizable energy content, which creates a direct mathematical link between body composition and energy balance. As I’ve written elsewhere, “All you need to do is accurately track your body weight every morning and your daily energy intake, and you can identify the calorie target required to meet your goal. If you’re trying to maintain body weight, then you’re trying to find the calorie target that keeps your weight stable.” Of course, if you’re trying to achieve a particular rate of weight gain or weight loss, the same principle applies: you’re looking for the calorie target that produces that rate of change.

The primary downside to this approach is that changes in sodium intake, carbohydrate intake, hydration status, and the amount of food in the gastrointestinal tract can cause day-to-day fluctuations in body weight that make it hard to determine which fluctuations are “signal” and which are “noise.” You could keep yourself very busy developing spreadsheets or algorithms that use different smoothing, weighting, and adjustment techniques to sort out this variability and tighten up your estimates, but the level of precision you pursue is entirely up to you.
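As one example of a smoothing technique (not the only reasonable choice), an exponentially weighted moving average blends each new weigh-in into a running trend. In this Python sketch the function name is hypothetical, and the smoothing factor `alpha = 0.1` is an assumed default: smaller values suppress more of the sodium-, carbohydrate-, and water-driven noise but respond more slowly to real changes in body mass.

```python
from typing import Iterable, List


def smoothed_trend(weights_kg: Iterable[float], alpha: float = 0.1) -> List[float]:
    """Exponentially weighted moving average of daily weigh-ins.

    Each new reading moves the trend only `alpha` of the way toward itself,
    so a one-day spike from a salty meal barely shifts the trend line.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    trend: List[float] = []
    for w in weights_kg:
        if not trend:
            trend.append(w)  # seed the trend with the first weigh-in
        else:
            trend.append(trend[-1] + alpha * (w - trend[-1]))
    return trend


# Hypothetical week with a 1.2 kg water-weight spike on day 4.
raw = [80.0, 80.1, 79.9, 81.2, 80.0, 79.8, 79.9]
for day, (w, t) in enumerate(zip(raw, smoothed_trend(raw)), start=1):
    print(f"day {day}: scale {w:.1f} kg, trend {t:.2f} kg")
```

The spike moves the trend by only about a tenth of its size, which is the point: week-to-week comparisons of the trend line are less likely to mistake noise for a real change in energy balance than comparisons of raw scale readings.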

When writing about scientific topics, my general aim is to share robust conclusions that are likely to stand the test of time, without any bias toward “wanting” a specific outcome. However, this particular topic is an exception: I hope (and expect) that wearable devices will eventually get better at estimating energy expenditure, so studies describing their current estimation errors are sure to be outdated in the near future. It remains to be seen whether these devices will become valid and reliable enough to independently inform dietary intake (without additional adjustments or algorithmic inputs). For now, the commercially available wearables that have been tested in the peer-reviewed literature come up short. Wearables can be great for measuring and tracking other physiological metrics (such as heart rate and step counts), but patiently and consistently tracking changes in energy intake and body composition is currently our best option for making inferences about energy expenditure and energy balance.

This Research Spotlight was originally published in MASS Research Review.

Credit: Graphics by Kat Whitfield.